Call of Duty Modern Warfare II

We worked closely with Infinity Ward, Blur Studio and Activision to deliver the facial animation for Call of Duty: Modern Warfare II, one of the world’s best-selling video games. Having previously delivered twenty-five minutes of facial animation for the cinematics in Call of Duty: Modern Warfare (2019), we reached a new level for MW2 using our enhanced digital double pipeline, PURE4D, to process over four hours of facial performance data for both cinematics and in-game animation. It’s our largest-scale project to date.

Activision

Infinity Ward

Blur Studio

PS4

PS5

Xbox One

Xbox Series X

PC

28 October 2022

4D capture for improved digital double facial rig creation.

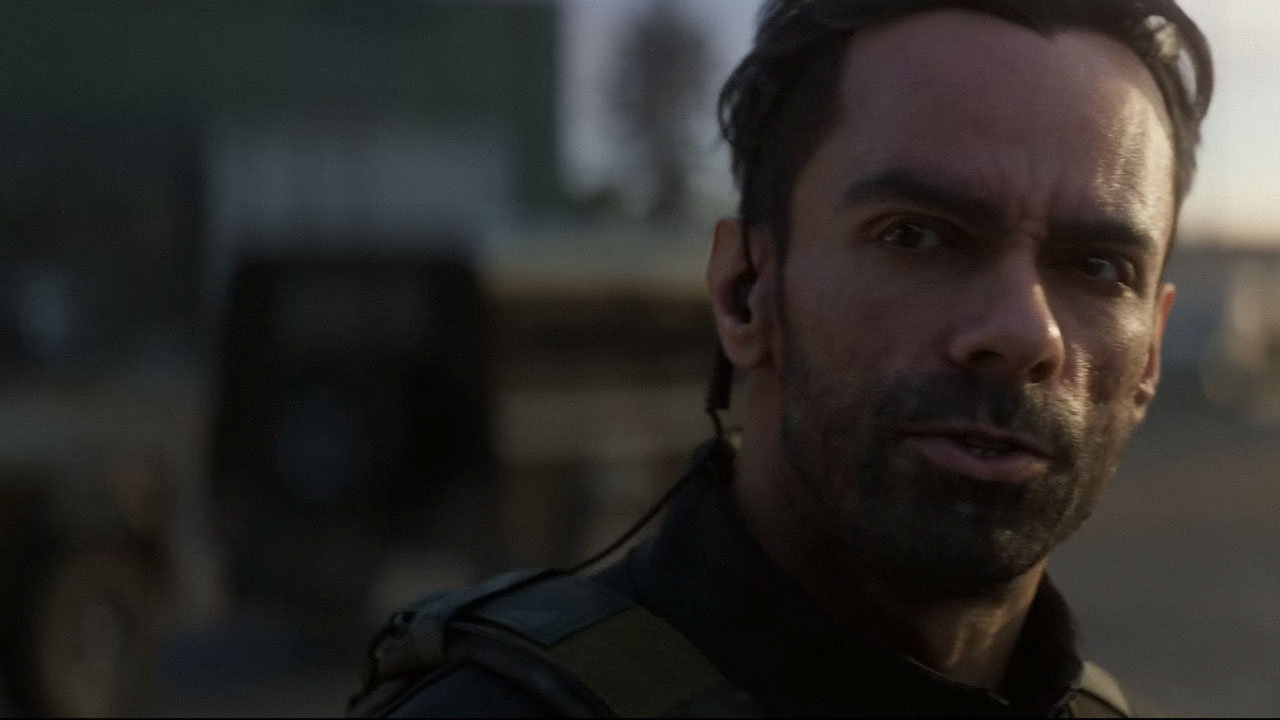

To achieve the highest possible level of graphical realism, Infinity Ward opted for the in-game characters to be performance-driven digital doubles of real actors. This includes Colonel Alejandro Vargas, who is played by Alain Mesa. Vargas is a Tier One Mexican Special Forces operator who leads Los Vaqueros and is an allied member of the elite counter-terrorism unit Task Force 141.

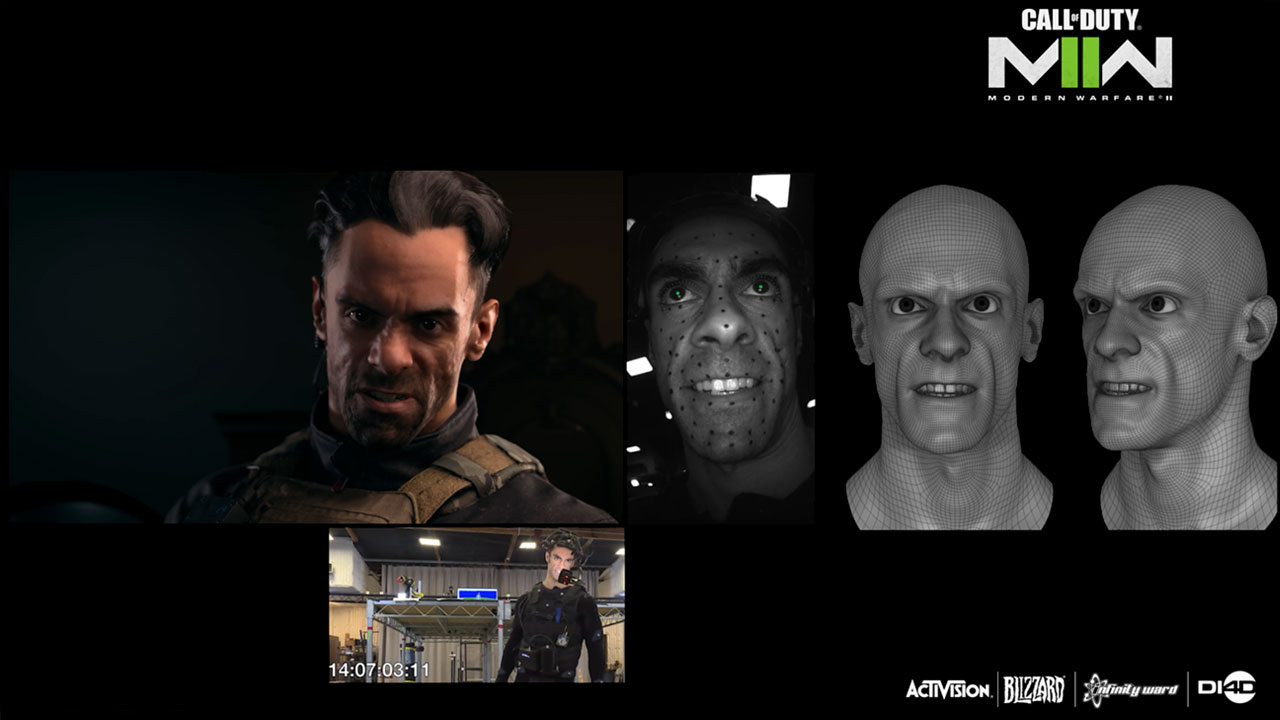

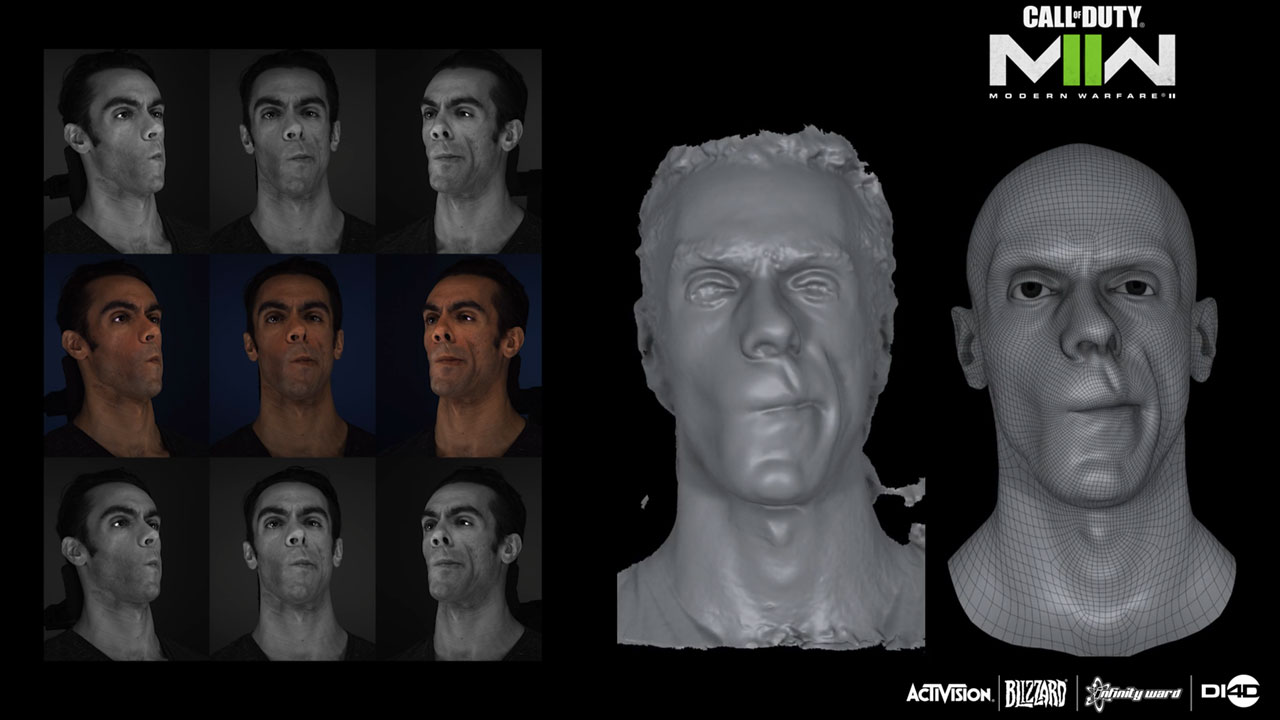

The 3D shape and appearance of all fourteen members of the main cast were captured in very high detail using Activision’s Light Stage system. A Light Stage scan of each actor with a neutral facial expression was retopologized and used by Infinity Ward to automatically infer an initial FACS-based animation rig for that actor using its FaceGEN system.

“We usually just do one session in the light stage (per actor),” explains Bernardo Antoniazzi, Principal Technical Artist at Infinity Ward. “We capture up to 100 poses, the standard basically FACS, poses, and then on the same day, we also do a DI4D capture. We go from the neutral to the pose – so we have the full range of motion – of every kind of deformation. We rely on the 4D data to build the rig.”

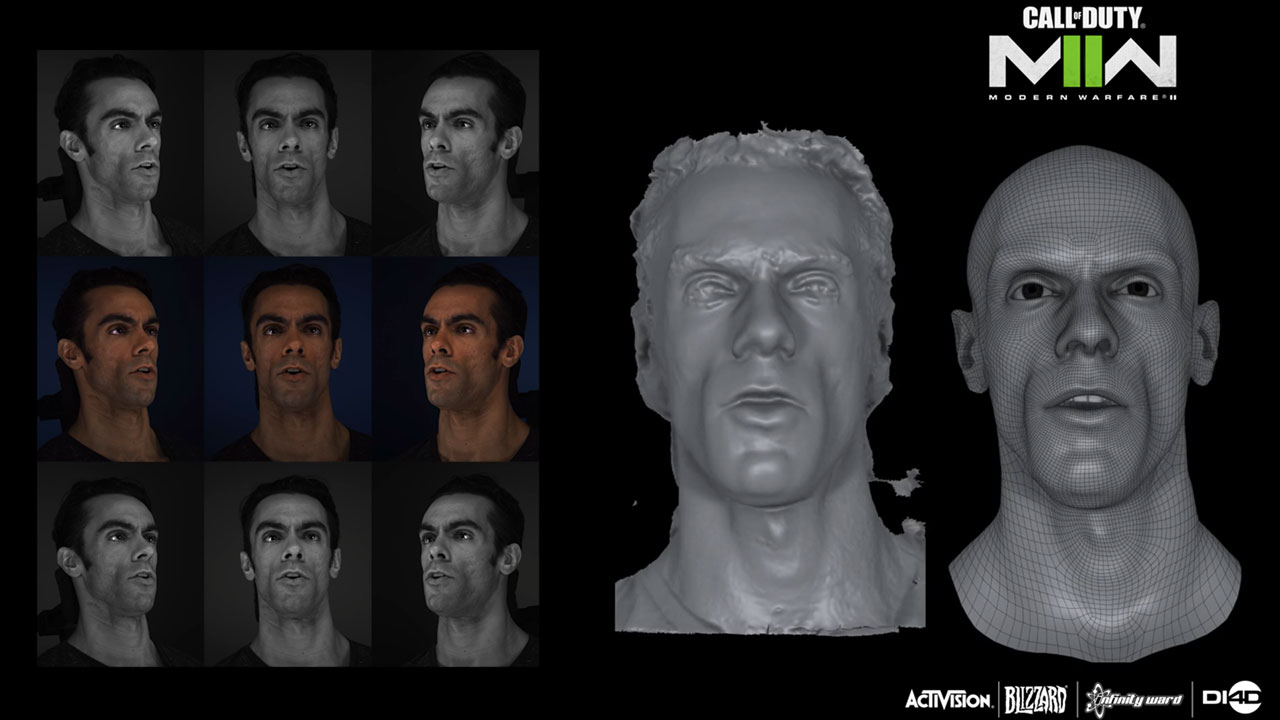

Next, we captured an extensive series of dynamic facial expressions and several phonetic pangrams from each member of the main cast with a DI4D PRO system. Phonetic pangrams are phrases designed to include all 40 basic sounds, or phonemes, of the English language. The DI4D PRO system is able to capture a large number of subtle ‘in-between’ dynamic facial expressions.

We first processed the machine vision camera data using photogrammetry to produce a raw 3D scan sequence, in which a separate 3D scan is reconstructed for every frame of the video data. The retopologized neutral expression mesh from the Light Stage was then registered precisely to one frame of the raw 3D scan sequence in which the actor has an expression similar to the Light Stage neutral. The registered mesh was then tracked accurately through the whole 3D scan sequence using optical flow, applying a consistent mesh topology to every frame in the sequence. The result is 4D data represented as dense point-cache animation.
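To make the idea of a point cache with consistent topology concrete, here is a minimal sketch in Python. It is not DI4D's implementation: the function name `track_mesh` and the per-vertex `flows` input are hypothetical stand-ins for the result of optical-flow tracking, and real tracking works on camera images and dense scans rather than three hand-written vertices.

```python
from typing import List, Tuple

Vec3 = Tuple[float, float, float]
Frame = List[Vec3]  # one position per vertex, always in the same vertex order


def track_mesh(registered: Frame, flows: List[List[Vec3]]) -> List[Frame]:
    """Propagate a registered mesh through a sequence of frames.

    flows[k][i] is an assumed displacement of vertex i between frame k and
    frame k + 1. Here it simply stands in for an optical-flow result.
    """
    cache = [list(registered)]
    for flow in flows:
        if len(flow) != len(registered):
            raise ValueError("flow must provide one displacement per vertex")
        previous = cache[-1]
        cache.append([
            (p[0] + d[0], p[1] + d[1], p[2] + d[2])
            for p, d in zip(previous, flow)
        ])
    return cache


# Toy data: a three-vertex "mesh" tracked over two further frames.
neutral = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
flows = [
    [(0.0, 0.1, 0.0), (0.0, 0.0, 0.0), (0.0, 0.0, 0.2)],
    [(0.0, 0.1, 0.0), (0.5, 0.0, 0.0), (0.0, 0.0, 0.2)],
]
point_cache = track_mesh(neutral, flows)
```

Every frame in `point_cache` has the same number of vertices in the same order, which is what allows the sequence to be treated as animation of a single mesh rather than as a set of unrelated scans.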

Infinity Ward used the DI4D PRO data to supplement and refine the initial FACS-based rig they created for each actor. This approach to facial rig creation saved a huge amount of time compared with a traditional rigging process that involves sculpting hundreds of facial shapes.

Driving digital doubles with high fidelity, in-game facial animation

For every scene, facial performance data was captured using head-mounted camera (HMC) systems simultaneously with the audio voice performance and body motion capture. Capturing all of the elements of a performance from the same actor at the same time was important for achieving accurate synchronization and the high degree of realism that the team were after.

Our pipeline puts greater emphasis on capturing the performance as delivered than on adjusting it afterwards in post-production, which is especially important given the volume of work the team were trying to do. The actors also respond well to this workflow, knowing that their performance will be faithfully reproduced in the final game.

DI4D processed hundreds of selects made from the HMC footage using its new proprietary PURE4D solution. PURE4D combines the actors’ facial performance data captured with HMCs during PCAP with the higher-fidelity dynamic expression data captured with the DI4D PRO system. PURE4D also uses advanced machine learning algorithms to significantly reduce the amount of manual clean-up normally associated with processing 4D data, allowing thousands of seconds of high-quality facial animation data to be delivered in a timely manner.

With PURE4D, modeling that previously took three weeks now takes one day. In addition, facial animation that had previously taken two weeks can now be done in three days. Infinity Ward found that the new method removed any subjective interpretation and allowed the artist to focus on making meaningful improvements rather than just fixing problems. It reduced the amount of clean-up and increased the accuracy of the final deformations.

The machine learning (ML) was of particular interest. The team used a series of blend shapes encoded into a lower-dimensional space representing the bones and linear blend skinning. This method of blend shape compression allows for efficient performance while also maintaining high fidelity in the game engine. Blend shapes are expensive to run and do not scale well across multi-platform deployment at the appropriate level of fidelity, especially on low-end platforms when facial rigs have more than 300 high-resolution blend shapes.
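The two evaluation models mentioned above can be compared in a small Python sketch. This is only an illustration of standard blend shape evaluation and linear blend skinning, not Infinity Ward's encoding, which is not described here. The function names and toy data are assumptions made for the example.

```python
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]
Mat3 = Sequence[Sequence[float]]
Bone = Tuple[Mat3, Vec3]  # (rotation matrix, translation) for one bone


def eval_blend_shapes(neutral: List[Vec3], deltas: List[List[Vec3]],
                      weights: List[float]) -> List[Vec3]:
    """v_i = neutral_i + sum_k weights[k] * deltas[k][i]."""
    return [
        tuple(base[a] + sum(w * deltas[k][i][a] for k, w in enumerate(weights))
              for a in range(3))
        for i, base in enumerate(neutral)
    ]


def eval_skinning(rest: List[Vec3], bones: List[Bone],
                  skin_weights: List[List[float]]) -> List[Vec3]:
    """v_i = sum_j skin_weights[i][j] * (R_j * rest_i + t_j)."""
    result = []
    for vertex, row in zip(rest, skin_weights):
        acc = [0.0, 0.0, 0.0]
        for (rot, trans), w in zip(bones, row):
            for a in range(3):
                moved = sum(rot[a][b] * vertex[b] for b in range(3)) + trans[a]
                acc[a] += w * moved
        result.append(tuple(acc))
    return result


IDENTITY = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]

# Toy mesh: two vertices; one blend shape lifts the second vertex along y.
neutral = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
lift = [(0.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
shaped = eval_blend_shapes(neutral, [lift], [0.5])

# The same pose from two bones: a fixed root and one bone moved up by 0.5.
bones = [(IDENTITY, (0.0, 0.0, 0.0)), (IDENTITY, (0.0, 0.5, 0.0))]
skin_weights = [[1.0, 0.0], [0.0, 1.0]]
skinned = eval_skinning(neutral, bones, skin_weights)
```

In this toy case both models produce the same pose. The practical difference is in cost: blend shape data grows with the number of shapes times the number of vertices, whereas skinning stores a few weights per vertex once and then animates only the bone transforms.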

“DI4D technology allowed us to implement incredibly realistic facial animations in a way that saved us a lot of time in the production process,” commented Sean Comer, Principal Machine Learning Engineer, Infinity Ward. “This meant we could focus on other important aspects of the game’s development without sacrificing the level of immersion and detail we were aiming for.”

The type of point-cache animation generated by PURE4D is able to faithfully represent an actor’s facial performance with a very high degree of accuracy, but it can be very heavy for in-game use and difficult to edit manually. Infinity Ward addressed this by solving the point-cache animation to their rig’s animation parameters.

This process introduced semantic control allowing an animator to modify the performance, giving final creative control to the animation team. However, because the rig and PURE4D data were both derived from the same set of DI4D PRO data, it was still possible to obtain a rig solve that was objectively very close to the point-cache animation and hence to the actor’s original performance. The rig-solve process also allowed the memory footprint of the animation to be scaled down for in-game use on a variety of hardware platforms.
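A rig solve of this kind can be pictured as a fitting problem: find the rig parameters whose output is closest to each point-cache frame. The Python sketch below assumes a purely linear blend shape rig and an unconstrained least-squares fit, which is a simplification and not a description of Infinity Ward's solver. The name `solve_weights` and the toy shapes are hypothetical.

```python
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def solve_weights(neutral: List[Vec3], deltas: List[List[Vec3]],
                  target: List[Vec3]) -> List[float]:
    """Least-squares weights w minimising
    |neutral + sum_k w[k] * deltas[k] - target|^2 (assumed linear rig)."""
    k = len(deltas)
    # Flatten per-vertex data into long vectors (vertex-major, then x, y, z).
    resid = [t[a] - n[a] for n, t in zip(neutral, target) for a in range(3)]
    basis = [[d[a] for d in shape for a in range(3)] for shape in deltas]
    # Normal equations A w = b, with A[i][j] = basis_i . basis_j.
    A = [[sum(x * y for x, y in zip(basis[i], basis[j])) for j in range(k)]
         for i in range(k)]
    b = [sum(x * y for x, y in zip(basis[i], resid)) for i in range(k)]
    # Gaussian elimination with partial pivoting.
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(A[r][col]))
        if abs(A[pivot][col]) < 1e-12:
            raise ValueError("shapes are linearly dependent")
        A[col], A[pivot] = A[pivot], A[col]
        b[col], b[pivot] = b[pivot], b[col]
        for r in range(col + 1, k):
            f = A[r][col] / A[col][col]
            for c in range(col, k):
                A[r][c] -= f * A[col][c]
            b[r] -= f * b[col]
    # Back substitution.
    w = [0.0] * k
    for r in range(k - 1, -1, -1):
        s = sum(A[r][c] * w[c] for c in range(r + 1, k))
        w[r] = (b[r] - s) / A[r][r]
    return w


# Toy rig: three vertices and two overlapping shapes.
neutral = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
shape_a = [(0.0, 0.0, 1.0), (0.0, 0.0, 0.0), (0.0, 0.0, 0.0)]
shape_b = [(0.0, 0.0, 1.0), (0.0, 1.0, 0.0), (1.0, 0.0, 0.0)]
true_w = [0.25, 0.75]

# One point-cache frame that the rig is able to reproduce exactly.
target = [
    tuple(n[a] + true_w[0] * sa[a] + true_w[1] * sb[a] for a in range(3))
    for n, sa, sb in zip(neutral, shape_a, shape_b)
]
weights = solve_weights(neutral, [shape_a, shape_b], target)
```

In this sketch the frame is then stored as `len(weights)` numbers instead of three floats per vertex, and an animator can adjust an individual weight by hand. A production solver would typically also constrain the parameters and smooth them over time.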

View a presentation from the Game Developers Conference, March 2023: The Facial Animation Pipeline of ‘Call of Duty: Modern Warfare II’ (Sponsored by DI4D). Hear from Bernardo Antoniazzi and Sean Comer of Infinity Ward about the methods for achieving realistic and nuanced digital doubles for Call of Duty: Modern Warfare II using DI4D’s proprietary technology.