Using DI4D Training Data with Machine Learning – Facial Capture for Digital Doubles

Machine learning is increasingly being used to improve the fidelity and realism of audio-driven and video-driven facial animation applications. However, the success of a machine learning approach depends critically on the quality and consistency of the training data used.

At DI4D, we specialize in driving lifelike animation for digital characters, or ‘digital doubles’: exact, computer-generated replicas of real-life people. High-fidelity facial animation of real-life actors acquired using our DI4D PRO system is also ideal as training data for machine learning, for several reasons: 1) DI4D PRO data faithfully represents the animation of a real-life person and is not subject to artistic interpretation; 2) sophisticated sub-pixel tracking technology ensures that DI4D PRO data is highly consistent from frame to frame and sequence to sequence; 3) using DI4D PRO, it is possible to quickly and conveniently acquire many minutes of high-fidelity facial animation from subjects across a range of ethnicities and ages; and 4) DI4D PRO produces point cache animation that is not constrained by the limitations of a particular facial animation rig.

Last year, we launched PURE4D, the world’s most precise pipeline for digital double facial animation. PURE4D makes high-fidelity facial animation simpler and smarter, and offers unprecedented scalability to meet the volume and precise detail required in next-gen video games. PURE4D utilises machine learning technology as part of its pipeline to deliver these results.

Machine learning will no doubt play a role in perfecting digital doubles. NVIDIA and Remedy Entertainment have previously explored how deep neural networks can be used to create high-fidelity digital doubles more efficiently than other methods, using a pipeline based on our DI4D PRO technology as part of the research.

“Due to humans’ innate sensitivity to the slightest facial cues, it is difficult to surpass the uncanny valley, where an otherwise believable rendering of a character appears lifeless or otherwise unnatural.”

NVIDIA

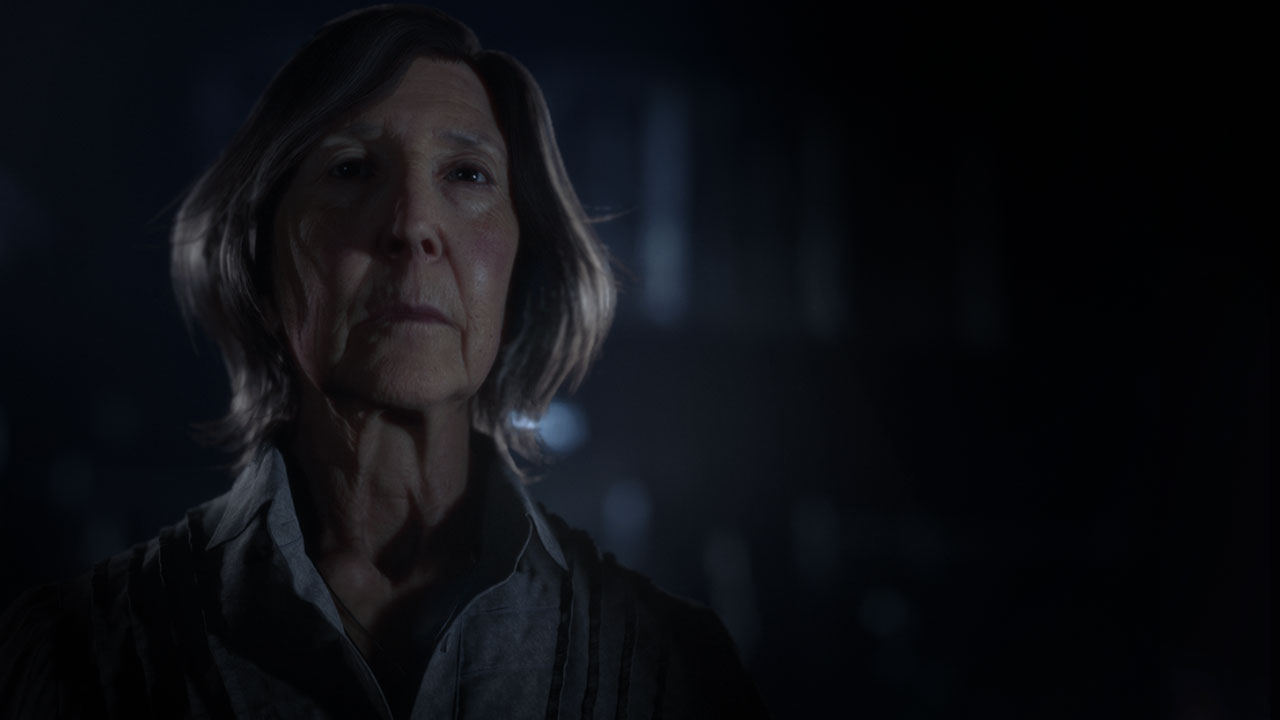

DI4D PRO training data has also been used to deliver detailed and nuanced facial animation in movies such as Morbius and video games such as The Quarry through Digital Domain’s ‘Masquerade’ pipeline. Phil Cramer, Animation Director for Digital Domain, explains that the training data helped them achieve the best-quality result.

“It allowed us to have the most accurate representation of our actors in motion. This was a critical step for us to understand how each pore moved. We utilized this data as training material for our Masquerade pipeline. In turn, we are able to output identical data via the very low-end head-mounted cameras the actors are wearing in each shot. This enabled us to provide the best quality of data on the go.”

Phil Cramer – Animation Director, Digital Domain

At DI4D, we support R&D projects by providing training data for machine learning. Our flexible and tailored approach means that we can shoot on location or support projects from our own studio. If you are not sure of your specific requirements but would like some advice on how our training data can help, we’re more than happy to share our knowledge.